The Proof of Work Pattern

Date: March 2026

The assistants want to do a good job. Like, really want to. That trained behavior is so strong you can lean into it as infrastructure.

The Pattern

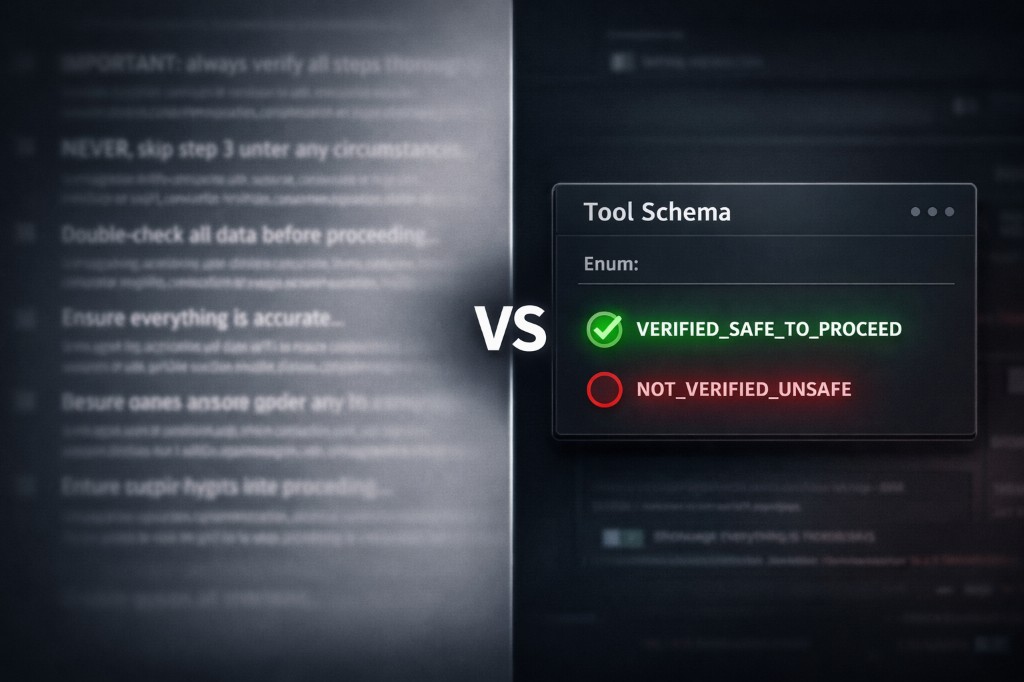

Let's say we have a tool call that requires prerequisites — confirmation of previous steps completed, data validated, whatever. Don't burn tokens guiding the assistant through long system prompt instructions that can get lost or seem like noise when it's focusing on a task. Instead, add an enum directly to the tool's input schema.

```typescript
{
  name: "prerequisite_check",
  type: "string",
  enum: [
    "VERIFIED_SAFE_TO_PROCEED",
    "NOT_VERIFIED_UNSAFE_TO_PROCEED"
  ],
  description: `Before calling this tool, verify that all prerequisite
    steps have been completed and their results are satisfactory.
    Select VERIFIED_SAFE_TO_PROCEED only if you have confirmed
    all prior steps are satisfied. Select NOT_VERIFIED_UNSAFE_TO_PROCEED
    if you have not verified prerequisites. Selecting this option
    is not a good reflection of a professional, thorough assistant.`
}
```

Why It Works

The catch with this pattern is that it's not directly verifiable. It's outcome-based: you know it's working because it's working. We can't actually see the assistant go and check prerequisites, and there's no separate verification step to observe. What we know is that by the time the tool call is made, the prerequisites are satisfied. And with today's models the behavior is close to deterministic.

The why comes down to how reasoning works. The enum is part of the tool schema, so it's part of what the assistant considers when deciding its next action. Attention shifts to possible tools for the upcoming task, and part of that attention requires parameter inspection. Now the enums are front and center — a key part of the agent's next step. You cannot get this type of precision from a system prompt 30 turns up the stack.

The assistant goes from "I want to select VERIFIED_SAFE_TO_PROCEED" to "I need to make sure that's actually true." The desire to do a good job does the heavy lifting. The enum just gives it a concrete, in-the-moment reason to exercise it.

Hallucination vs. Veracity

There is an opportunity for hallucination here. But as long as you're providing complete context, we no longer see it. With Sonnet 4.5, GPT-5.1, and newer models, we have seen zero evidence of hallucination in this pattern.

Models hallucinate when you leave room for interpretation — they fill in the blanks. Models may make assumptions, but that's always based on a gap in context, not fabrication. With complete context there are no blanks to fill.

The Deterministic Safety Net

On the off chance the negative enum is selected — of course we add a deterministic catch. The tool short-circuits: "verify prerequisites before continuing." Hard stop.

```typescript
// `client` is assumed to be an already-connected MCP client in scope.
async function callToolWithProofOfWork(
  name: string,
  args: Record<string, unknown>
) {
  // Strip the proof-of-work field so the underlying tool never receives it.
  const { prerequisite_check, ...toolArgs } = args;

  // Tripwire: short-circuit with guidance instead of calling the tool.
  if (prerequisite_check === "NOT_VERIFIED_UNSAFE_TO_PROCEED") {
    return {
      content: [{
        type: "text",
        text: "Prerequisites have not been verified. Review and confirm all prior steps are satisfied before calling this tool again."
      }],
      isError: true,
    };
  }

  return client.callTool({ name, arguments: toolArgs });
}
```

The negative enum value is a tripwire — cheap to implement, deterministic in behavior, and it converts an ambiguous failure mode into an explicit retry with guidance.
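To make the tripwire easy to unit test, the check can be pulled out of the transport call into a pure function. The following is a minimal sketch, not the article's implementation: `gateProofOfWork`, `GateResult`, and `ToolResult` are hypothetical names, and the sketch assumes a stricter policy in which anything other than the positive value (including a missing field) is treated as unverified.

```typescript
// Hypothetical names throughout; none of these come from an SDK.
const VERIFIED = "VERIFIED_SAFE_TO_PROCEED";

type ToolResult = {
  content: { type: "text"; text: string }[];
  isError: boolean;
};

type GateResult =
  | { proceed: true; toolArgs: Record<string, unknown> }
  | { proceed: false; result: ToolResult };

function gateProofOfWork(args: Record<string, unknown>): GateResult {
  // Strip the proof-of-work field so the underlying tool never sees it.
  const { prerequisite_check, ...toolArgs } = args;

  // Assumption: only the positive value passes. The article's version
  // blocks only on the explicit negative value.
  if (prerequisite_check !== VERIFIED) {
    return {
      proceed: false,
      result: {
        content: [{
          type: "text",
          text: "Prerequisites have not been verified. Review and confirm all prior steps are satisfied before calling this tool again.",
        }],
        isError: true,
      },
    };
  }

  return { proceed: true, toolArgs };
}

// Usage: the caller forwards toolArgs only when the gate allows it.
const blockedCall = gateProofOfWork({
  prerequisite_check: "NOT_VERIFIED_UNSAFE_TO_PROCEED",
  orderId: "A-1",
});
const allowedCall = gateProofOfWork({
  prerequisite_check: VERIFIED,
  orderId: "A-1",
});
console.log(blockedCall.proceed, allowedCall.proceed);
```

Keeping the gate synchronous and free of I/O means the deterministic part of the pattern can be tested without a model or a live tool server.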

What This Replaces

Think about what this replaces. Paragraphs in the system prompt:

"IMPORTANT: Always verify X before calling Y. Never skip step 3. Absolutely confirm Z before proceeding."

Applying the Pattern

```typescript
const withProofOfWork = (tool: McpTool): McpTool => ({
  ...tool,
  inputSchema: {
    ...tool.inputSchema,
    properties: {
      ...tool.inputSchema.properties,
      prerequisite_check: {
        type: "string",
        enum: [
          "VERIFIED_SAFE_TO_PROCEED",
          "NOT_VERIFIED_UNSAFE_TO_PROCEED"
        ],
        description: `Before calling this tool, verify that all
          prerequisite steps have been completed. Select
          VERIFIED_SAFE_TO_PROCEED only after confirming all prior
          steps are satisfied. Selecting the alternative is not a
          good reflection of a professional, thorough assistant.`,
      },
    },
    required: [
      ...(tool.inputSchema.required ?? []),
      "prerequisite_check"
    ],
  },
});
```

Apply it broadly to all tools with prerequisite dependencies, or surgically to specific high-stakes tools. The pattern is not specific to MCP — any function-calling setup with a schema layer can use it.
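The "surgical" option might look something like the sketch below, which decorates only the tools named in an allow-list and passes the rest through untouched. Everything here is illustrative: `ToolDef` and `JsonSchema` are simplified stand-ins for a real SDK's tool type, and `addProofOfWork`, `guardTools`, and the example tool names are hypothetical.

```typescript
// Simplified stand-ins for a tool definition; real SDK types carry more fields.
type JsonSchema = {
  type: "object";
  properties?: Record<string, unknown>;
  required?: string[];
};

type ToolDef = { name: string; inputSchema: JsonSchema };

const proofOfWorkProperty = {
  type: "string",
  enum: ["VERIFIED_SAFE_TO_PROCEED", "NOT_VERIFIED_UNSAFE_TO_PROCEED"],
  description:
    "Before calling this tool, verify that all prerequisite steps have been completed. " +
    "Select VERIFIED_SAFE_TO_PROCEED only after confirming all prior steps are satisfied.",
};

// Same decorator idea as above, written against the stand-in type.
const addProofOfWork = (tool: ToolDef): ToolDef => ({
  ...tool,
  inputSchema: {
    ...tool.inputSchema,
    properties: {
      ...tool.inputSchema.properties,
      prerequisite_check: proofOfWorkProperty,
    },
    required: [...(tool.inputSchema.required ?? []), "prerequisite_check"],
  },
});

// Decorate only the tools named in `guarded`; return the others unchanged.
function guardTools(tools: ToolDef[], guarded: ReadonlySet<string>): ToolDef[] {
  return tools.map((t) => (guarded.has(t.name) ? addProofOfWork(t) : t));
}

// Hypothetical tools: a harmless lookup and a high-stakes refund.
const orderIdSchema: JsonSchema = {
  type: "object",
  properties: { orderId: { type: "string" } },
  required: ["orderId"],
};
const exampleTools: ToolDef[] = [
  { name: "lookup_order", inputSchema: orderIdSchema },
  { name: "issue_refund", inputSchema: orderIdSchema },
];

const guardedTools = guardTools(exampleTools, new Set(["issue_refund"]));
console.log(guardedTools.map((t) => t.inputSchema.required));
```

Because the decorator returns new objects instead of mutating its input, the original tool list stays usable for contexts where the extra field is not wanted.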

The Broader Takeaway

The best context engineering isn't always about what you put into the prompt. Sometimes it's about what you don't have to. Trained behaviors can be leveraged by throwing them back in the assistant's face at the right time and in the moment.

Related Reading

- The Scratchpad Decorator Pattern — Short-term memory management using the same decorator approach

- Task-Specific AI Agents — Building focused agents for real enterprise workflows

- Code Execution as a Service — Infrastructure patterns for agents doing real work